Dark Energy Spectroscopic Instrument (DESI)

DESI is mapping the three-dimensional positions of tens of millions of galaxies over five years, providing unprecedented constraints on the expansion history of the universe.

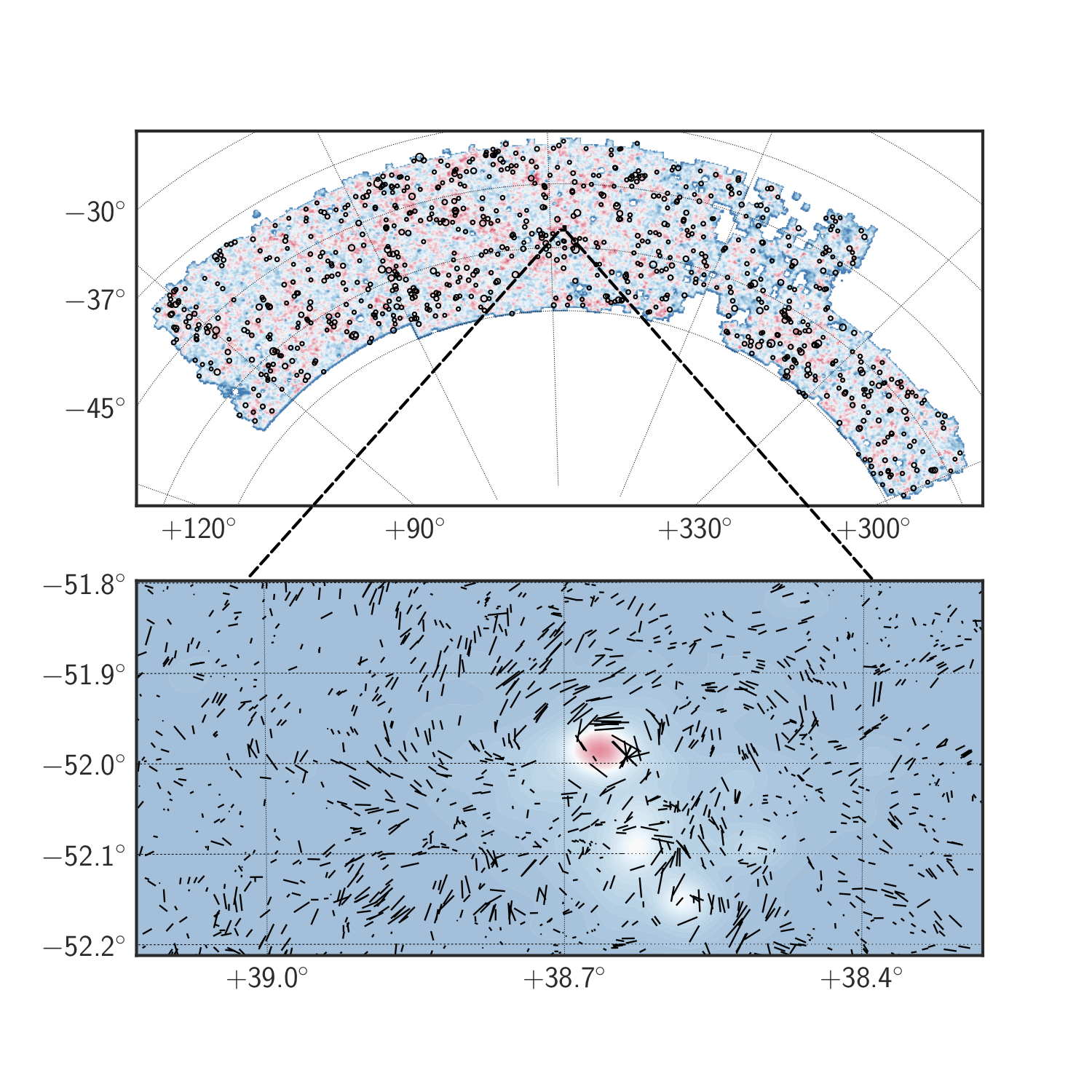

I'm particularly focused on cross-correlating weak gravitational lensing (the subtle bending of light as it travels through the universe) with DESI galaxy positions. This combination provides a powerful test of gravity and dark energy on cosmological scales.

We demonstrated the potential of this approach in a recent analysis that cross-correlated DESI Early Data Release galaxy positions with weak lensing measurements from the Planck satellite, finding results consistent with general relativity and the standard cosmological model.

The Dark Energy Survey (DES)

DES is a large-scale photometric survey spanning 5,000 square degrees that delivers precision cosmological constraints by measuring the weak gravitational lensing signatures imprinted on galaxy shapes by cosmic structures.

I have been heavily involved in many aspects of the cosmological analyses of the first three years of DES data, including the three-by-two-point correlation analysis (combining galaxy clustering, galaxy-galaxy lensing, and cosmic shear).

The DES Y3 results represent some of the tightest constraints on the growth of cosmic structure and the properties of dark energy, with implications for fundamental physics at the largest scales.

Hybrid Effective Field Theory

Historically, models for large-scale structure fall into two categories: perturbation theory-based approaches, which are analytically tractable and computationally fast but limited to quasi-linear scales; and simulation-based methods, which capture complex nonlinear physics but are computationally expensive and prone to noise from finite simulation volumes.

Along with collaborators, I developed Hybrid Effective Field Theory (HEFT), which combines the strengths of both approaches—leveraging simulation-calibrated perturbation theory to capture nonlinear effects accurately across a broad range of scales while maintaining analytical flexibility.
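As a rough sketch of the idea, written in notation that is common in the HEFT literature rather than taken from this page: the tracer field is built by weighting Lagrangian positions with a low-order bias expansion and moving them with the fully nonlinear displacement field measured in an N-body simulation. The symbols below ($F$, $\boldsymbol{\Psi}$, $b_X$, $P_{XY}$) are assumptions introduced for illustration, stochastic terms are omitted, and normalization conventions vary between papers.

$$
1 + \delta_g(\mathbf{x}) = \int d^3q \, F(\mathbf{q}) \, \delta_D\!\left(\mathbf{x} - \mathbf{q} - \boldsymbol{\Psi}(\mathbf{q})\right),
\qquad
F = 1 + b_1 \delta_L + b_2\left(\delta_L^2 - \langle \delta_L^2 \rangle\right) + b_s\left(s_L^2 - \langle s_L^2 \rangle\right) + b_\nabla \nabla^2 \delta_L
$$

$$
P_{gg}(k) = \sum_{X, Y} b_X \, b_Y \, P_{XY}(k),
\qquad
X, Y \in \{1, \delta_L, \delta_L^2, s_L^2, \nabla^2 \delta_L\}
$$

Here the coefficient of the unit operator is fixed to 1, $\delta_L$ and $s_L^2$ are the linear density and tidal fields at the Lagrangian position $\mathbf{q}$, and the basis spectra $P_{XY}(k)$ are measured from simulations. The bias parameters enter only as polynomial coefficients, which is what keeps the model analytically flexible.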

I released a public HEFT implementation that includes sophisticated treatments of massive neutrino effects. It is now actively used in cosmological analyses by the DESI, DES, and ACT collaborations, enabling tighter constraints on fundamental physics.

Zel'dovich Control Variates

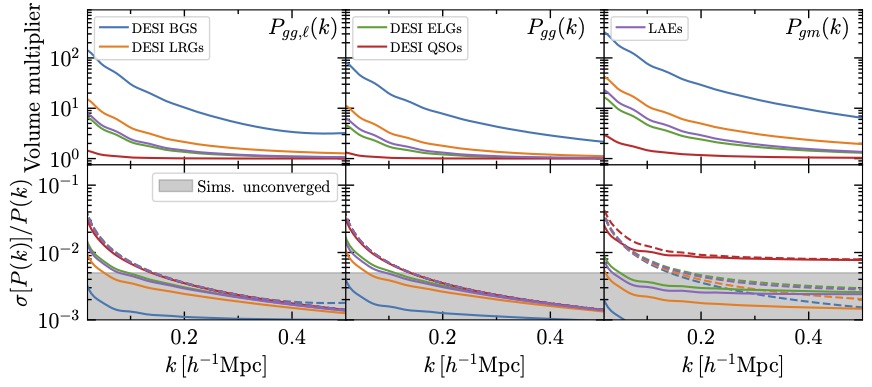

A major limitation of cosmological simulations is the statistical uncertainty arising from simulating finite volumes—we can only simulate small patches of the universe, introducing noise in our predictions.

My collaborators and I developed a theoretically near-optimal variance reduction technique based on control variates, a classical statistical method.

The key insight is surprisingly simple: we run a full cosmological N-body simulation alongside a fast Zel'dovich realization of the same initial conditions. The Zel'dovich approximation is first-order Lagrangian perturbation theory, in which particles move along straight lines. Despite its simplicity, the Zel'dovich realization is highly correlated with the full simulation, and we can analytically predict its statistics.

By pairing these simulations, we can remove much of the noise from the full simulation, dramatically improving the precision of cosmological predictions. This technique is already enhancing our DESI analyses and has broad applications across the field.
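The pairing described above can be illustrated with the textbook control-variate estimator. This is a minimal sketch on toy Gaussian data, not the pipeline used in the DESI analyses: the function name `control_variate_estimate`, the toy numbers, and the use of a single scalar statistic (standing in for, say, the power spectrum in one k-bin) are all assumptions made for illustration.

```python
import numpy as np


def control_variate_estimate(x, c, mu_c):
    """Classical control-variate correction: x - beta * (c - mu_c).

    x    : noisy statistic from the expensive simulations (one value per realization)
    c    : the same statistic from the cheap, correlated surrogate realizations
    mu_c : the analytically known mean of c
    beta : the variance-minimizing coefficient Cov(x, c) / Var(c), estimated here
           from the samples themselves
    """
    x = np.asarray(x, dtype=float)
    c = np.asarray(c, dtype=float)
    beta = np.cov(x, c)[0, 1] / np.var(c, ddof=1)
    return x - beta * (c - mu_c), beta


# Toy demonstration (hypothetical numbers): both "simulations" share the same
# random fluctuation, mimicking shared initial conditions.
rng = np.random.default_rng(0)
n_real = 2000
shared = rng.normal(size=n_real)                            # common sample variance
x = 2.0 + 0.9 * shared + 0.1 * rng.normal(size=n_real)      # "full" simulation
c = 1.0 + shared                                            # "cheap" surrogate
reduced, beta = control_variate_estimate(x, c, mu_c=1.0)

print("variance before:", x.var(ddof=1))
print("variance after: ", reduced.var(ddof=1))
```

The correction leaves the mean of the estimate unchanged because the subtracted term has zero mean by construction. How much noise it removes depends on how strongly the two statistics are correlated, which is why a surrogate sharing the full simulation's initial conditions works well.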

ML Accelerated Simulations

Cosmology is fundamentally a simulation-dependent field—our ability to test theories about dark energy and gravity hinges on comparing observations to realistic predictions from N-body simulations of cosmic structure formation.

However, running high-resolution simulations across the range of physical models we need to explore remains computationally expensive. Much of my work focuses on leveraging modern machine learning and data science techniques to accelerate and enhance simulations.

This includes developing surrogate models that can quickly predict simulation outputs, improving the realism of simulations to better match observations from galaxy surveys like DESI and from CMB experiments, and enabling faster exploration of parameter space to constrain fundamental physics.
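To make the surrogate idea concrete, here is a deliberately small sketch: a polynomial fit trained on a handful of runs of a stand-in "simulation", then evaluated cheaply at new parameter values. Everything in it is hypothetical, including `toy_simulation`, the single parameter `theta`, and the choice of a Chebyshev polynomial. Surrogates used in practice are typically neural networks or Gaussian processes trained on real simulation suites.

```python
import numpy as np


def toy_simulation(theta):
    """Hypothetical stand-in for an expensive simulation summary statistic."""
    theta = np.asarray(theta, dtype=float)
    return np.sin(3.0 * theta) + 0.5 * theta**2


def fit_surrogate(theta_train, y_train, degree=7):
    """Least-squares Chebyshev fit; returns a callable surrogate model."""
    return np.polynomial.Chebyshev.fit(theta_train, y_train, deg=degree)


# Train on a small design of "expensive" runs ...
theta_train = np.linspace(0.0, 1.0, 20)
surrogate = fit_surrogate(theta_train, toy_simulation(theta_train))

# ... then predict at many new parameter values at negligible cost.
theta_new = np.linspace(0.0, 1.0, 200)
max_error = np.max(np.abs(surrogate(theta_new) - toy_simulation(theta_new)))
print("max surrogate error on held-out points:", max_error)
```

Checking the surrogate against held-out runs, as in the last lines, is the step that determines whether it is accurate enough to replace the simulation inside a parameter-inference loop.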
